Context, Process, and Participant Response in the Implementation of Family Support Programmes in Spain

[El contexto, el proceso y la respuesta de los participantes en la implementación de programas de apoyo familiar en España]

Sonia Byrne1, Silvia López-Larrosa2, Juan C. Martín3, Enrique Callejas1, María L. Máiquez1, and María J. Rodrigo1

1Universidad de La Laguna, Tenerife, Spain; 2Universidade da Coruña, A Coruña, Spain; 3Universidad de Las Palmas de Gran Canaria, Gran Canaria, Spain

https://doi.org/10.5093/psed2022a8

Received 9 March 2022, Accepted 6 July 2022

Abstract

Implementation research addresses how well a programme is conducted when applied in real-world conditions. However, research based on quality standards is still scarce as it requires monitoring context, process, and participant response. This study applies implementation quality standards to 57 Spanish parenting and family support programmes identified in the COST European Family Support Network project, using a ten-component evaluation sheet. Descriptive analyses showed a good implementation level. The latent profile analysis identified four patterns defined by programme setting: profile 1, Social Services/NGO setting (21.1%), profile 2, Health setting (31.6%), profile 3, Multi-setting (14%), and profile 4, Educational setting (33.3%), differing in professional discipline, training, participant response, and professional perception of implementation. Profile memberships were related to programme outcomes, scaling up, and sustainability. Findings illustrate conceptual and practical challenges that researchers and professionals usually encounter during implementation, and the efforts required to deliver programmes effectively in real-world settings in Spain.

Resumen

La investigación sobre implementación se ocupa de la calidad con la que se aplica un programa en condiciones del mundo real. Sin embargo, la investigación basada en patrones de calidad es aún escasa, ya que requiere supervisar el contexto, el proceso y la respuesta de los participantes. El presente estudio aplica los patrones de calidad a 57 programas españoles de apoyo parental y familiar identificados en el proyecto COST-European Family Support Network, en los que se utilizó una hoja de evaluación de diez componentes. Los análisis descriptivos mostraron un buen nivel de implementación. El análisis de clases latentes detectó cuatro perfiles definidos por el entorno donde se aplica el programa: el perfil 1, contexto de los servicios sociales/ONG (21.1%), el perfil 2, contexto sanitario (31.6%), el perfil 3, diversos contextos (14%), y el perfil 4, entorno educativo (33.3%), que difieren en la disciplina del profesional, la formación, las respuestas de los participantes y la percepción que tiene el profesional sobre la implementación. La pertenencia a los diversos perfiles se relacionaba con los resultados del programa, su ampliación a gran escala y la sostenibilidad. Los resultados ponen de manifiesto los desafíos conceptuales y prácticos que tanto investigadores como profesionales suelen encontrar durante la implementación, así como los esfuerzos necesarios para aplicar los programas de forma efectiva en contextos reales en España.

Palabras clave

Programas basados en evidencia, Componentes de la implementación, Impacto de los programas, Patrones de calidad, Parentalidad positiva

Keywords

Evidence-based programmes, Implementation components, Programme impact, Quality standards, Positive parenting

Cite this article as: Byrne, S., López-Larrosa, S., Martín, J. C., Callejas, E., Máiquez, M. L., & Rodrigo, M. J. (2023). Context, Process, and Participant Response in the Implementation of Family Support Programmes in Spain. Psicología Educativa, 29(1), 25-33. https://doi.org/10.5093/psed2022a8

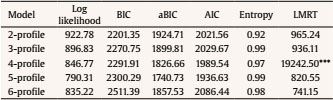

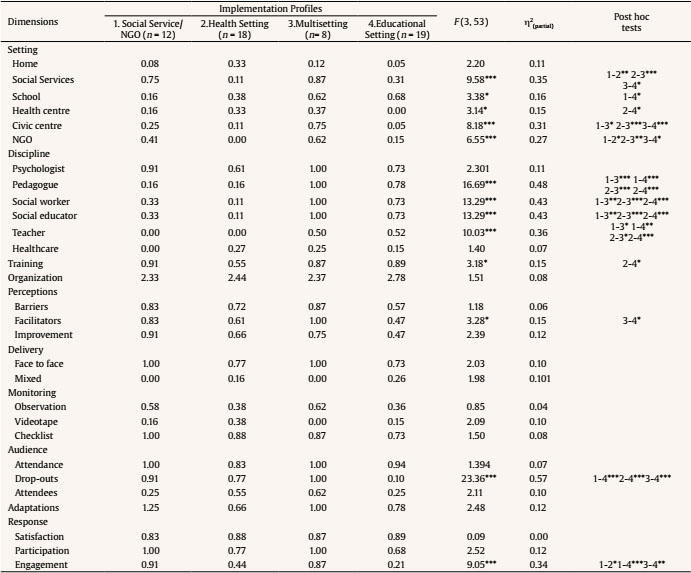

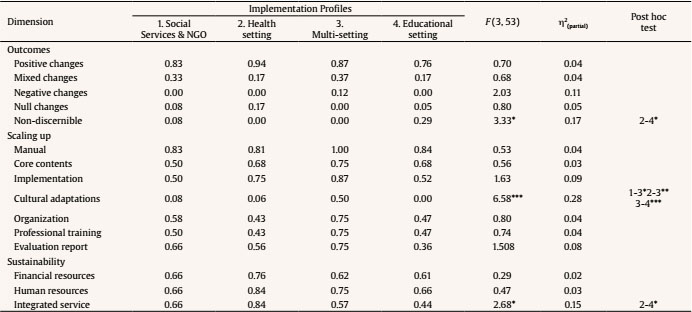

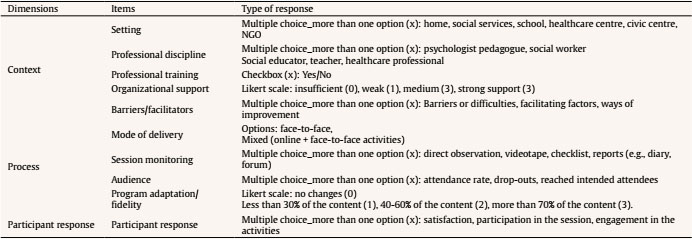

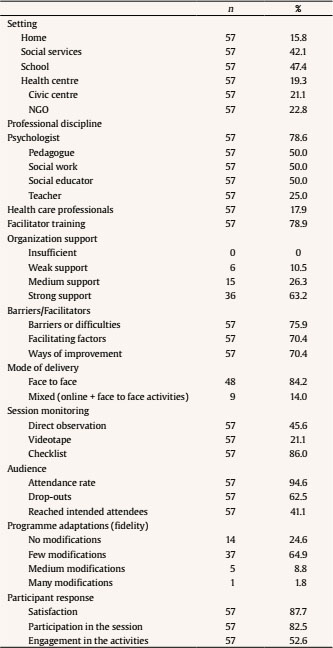

Correspondence: sbyrne@ull.edu.es (S. Byrne).

Family is a main learning environment for both children and parents (Laosa & Sigel, 1982; Lehrl et al., 2020; López-Larrosa, 2001; Sanders et al., 2017). On the one hand, the family is intended to be a preventive and protective environment for the proper educational, social-emotional, and physical development of children (López-Larrosa & Escudero, 2003). On the other hand, parents learn to become parents in the family, a complex task that entails a set of competences and skills, among others, educating, nurturing, protecting, guiding, stimulating, monitoring, accepting, qualifying, and socially connecting their children in order to assure their wellbeing (Bradley, 2007; Budd, 2005; Reder et al., 2003). In the past, the parenting task was considered an autonomous and private practice that parents learned through societal intergenerational processes. Nowadays, it is increasingly assumed that the parenting task can also be learned through explicit and purposeful training to build the skills and resources that better equip parents to carry out their task (Daly, 2013). On these grounds, positive parenting policies across Europe (Consejo de Europa, 2006; Rodrigo et al., 2016) assume that government institutions must create the conditions to support parents and families, embracing a supportive and proactive role that prioritizes the parenting task and establishes a partnership with parents and families. Paying attention to parents and families as a means to improve children’s lives in dimensions such as education, health, or emotional development, to prevent future difficulties, or to protect them from current harm has driven different stakeholders (politicians, organizations, professionals) to develop and evaluate parenting and family programmes (de Paúl, 2012; Rodrigo et al., 2016; Sanders et al., 2017; Whitcombe-Dobbs & Tarren-Sweeney, 2019; Zuchowski et al., 2019).
These programmes address the promotion of parental competences in order to benefit children but, while doing so, they have an impact on the parents, the family system, and ultimately on society as a whole, in terms of improved mental health, improved social and educational services, or economic return (Arruabarrena & de Paúl, 2012; Bennet, 2013; Nystrand, 2020; Rodrigo et al., 2015; Sujan & Eckenrode, 2017).

Standards of Programme Implementation

The growing recognition of the importance of developing parenting programmes has been accompanied by the claim that resources should be devoted to evidence-based interventions (Flay et al., 2005; Powers et al., 2015; Rodrigo et al., 2016; Temcheff et al., 2018). Programme implementation has been identified as a key dimension to be addressed in evidence-based research among the standards for evidence in prevention science (Flay et al., 2005; Gottfredson et al., 2015). Programmes are first developed and implemented under optimal conditions such as well-trained and supervised staff and convenience samples. The efficacy of a programme has to do with its positive effects under such optimal conditions. In turn, effectiveness refers to the effects of a programme that has been implemented under real-world conditions (Flay et al., 2005). In efficacy trials, it is desirable to measure the level at which a programme is implemented and the level of implementation that produces a reported effect, together with the measurement of the implementation in the control conditions. In effectiveness trials, researchers must comply with efficacy standards and also with effectiveness standards. These standards refer to identifying the level at which the programme has been implemented under real-world conditions and the integrity with which it has been applied. Also, the engagement, involvement, or acceptance of the participants should be reported.
There must be manuals and proper training that other professionals may use to apply the programmes, and indications about the implications of outcomes for professional practice and to whom results can apply. Gottfredson et al. (2015) state that the quality and quantity of implementation must be measured and reported in efficacy trials, meaning the precursors of implementation such as staff qualification or training, the level and integrity of implementation, and the engagement of the participants. In effectiveness trials, the fidelity and quality of implementation under real-world conditions must be compared to that achieved in efficacy trials.

Complexity of Implementation Research

Implementation research refers to the study of how well a programme is conducted and how it works when applied in real-world conditions (Durlak, 2015a; Goldstein & Olswang, 2017). The aim is to identify the ingredients of successful interventions in order to improve the lives of those who are served by these programmes. Implementation research also helps to match the needs of children and families with the most effective programmes (Durlak, 2015a; Goldstein & Olswang, 2017; Powers et al., 2015; Sujan & Eckenrode, 2017). Implementation research applies to preventive and treatment interventions whether the service or programme happens in education services, mental or physical health services, or social services, and serves any type of participants (Durlak, 2015a). According to Peters et al. (2013), the challenge of implementation research is to work with real beneficiaries of interventions in their proper contexts instead of convenience samples. This makes implementation research a complex endeavour with many aspects that need to be addressed (Durlak & DuPre, 2008). In order to illustrate these complexities, we discuss them from the conceptual, methodological, and economic perspectives. The conceptual perspective comprises difficulties in setting terms and models.
There is a lack of consensus in the vocabulary and in the operational definition of terms, which leads to uncertainty about what has been measured when results are reported. Researchers may report supposedly different components because they are named differently, while other researchers consider them similar components, all because terms do not share the same operational definition (Durlak & DuPre, 2008; Meyers et al., 2012). Another conceptual difficulty arises from the existence of different models of implementation (Berkel et al., 2011; Damschroder et al., 2009; Durlak & DuPre, 2008). These models emphasize the relevance of considering multiple dimensions when addressing implementation, which at least refer to the programme itself, the context, the process, and the participants (Durlak & DuPre, 2008; Peters et al., 2013; Pinto et al., 2021). However, there is a lack of consensus on which key components should be examined in implementation research in general, and in parenting programmes specifically, although several components are recurrently mentioned (Durlak & DuPre, 2008). Following Durlak and DuPre (2008), there are eight core components: fidelity, dosage, quality of delivery, participant responsiveness, programme uniqueness, monitoring, programme population reach (participation rates, programme scope), and programme modifications. Based on the previous model, Berkel et al. (2011) proposed a functional model that relates facilitators’ behaviours (fidelity, quality of delivery, and programme adaptation) to the responsiveness of the participants, which ultimately relates to the programme outcomes. Fidelity has to do with adherence to the programme model, the content, or the dosage of sessions. Quality of delivery has a broad definition and refers to the professionals’ skills to unfold the sessions and to create a supportive environment. Programme adaptation refers to the changes that are made to the programme.
Participants’ response to the programme refers to session attendance, active involvement and engagement in the sessions, and degree of satisfaction with the programme.

From the methodological perspective, the wealth of methods and data collection approaches to monitor professionals and institutions/services in real-world conditions is noteworthy. But monitoring is in itself a difficult task in real-world conditions and, furthermore, it should be sustained over time (Durlak, 2015a; Peters et al., 2013; Powers et al., 2015; Stern et al., 2008). Research methods can be qualitative, quantitative, or mixed. For data collection, implementation researchers can use a variety of procedures and instruments, for instance, surveys, checklists, observations, focus groups, or interviews, among others (Peters et al., 2013). However, the scarcity of reliable instruments and systematic procedures for evaluating the implementation process is also noteworthy (Durlak, 2015a; Peters et al., 2013). This implies an extra effort on the part of researchers.

From the economic perspective, implementation research is costly since it requires allocating specific resources to evaluate the setting up and operation of programmes (Weegar et al., 2018). As resources are usually limited, investing in implementation evaluation implies that other needs are not financed, or that extra money is required to support assessment. This is a hard decision for services and institutions to make. As a result, services, institutions, and professionals may not see the need to support implementation research (Durlak & DuPre, 2008; Weegar et al., 2018). However, research has shown that poorly implemented programmes are highly costly, wasting money, resources, and time (Durlak, 2015b).

The Present Study

In this study, we aimed to examine the implementation of parenting and family support programmes operating in real-world conditions in Spain.
These programmes have been implemented by several entities in education, healthcare, social, and community sectors. We built on a previous review undertaken in 2016 which evaluated the implementation process in seven Spanish programmes for parents, children, and families operating in several regions, and which also included a survey of the parenting programmes implemented in the Basque Autonomous Region (Álvarez et al., 2016; Amorós-Martí et al., 2016; Arranz et al., 2016; Hidalgo et al., 2016; Martínez-González et al., 2016; Orte et al., 2016; Rodríguez-Gutiérrez et al., 2016; Rodrigo, 2016; Suárez et al., 2016). Our first aim was to analyse the dimensions and components that can affect programme implementation. Inspired by Berkel et al. (2011), Durlak (2015a), Durlak and DuPre (2008), and Pinto et al. (2021), we proposed components belonging to three dimensions: the context where the implementation takes place, the process of monitoring the intervention, and participants’ responses during the intervention. The definition and operationalization of our target components in each dimension are as follows. In the context dimension, “setting of delivery” is the place where the programme is implemented, for instance, family home, social service facilities, schools, health care services, civic centres, or NGOs; “professional discipline and training” refer to the academic degree achieved by the facilitators and the specific training that is needed for them to implement the programme; “organizational support” refers to the support provided by the agency to implement the programme (i.e., human resources, material resources, space, coffee break, etc.); “barriers/facilitating factors” refer to professionals’ perception of implementation.
In the process dimension, “mode of delivery” refers to the way the programme is presented to its targets, for instance, face to face or mixed (online and face to face); “session monitoring” refers to the methods used to record or assess each session of the programme, for instance, video recording, direct observation, or checklists; “attendance and reached attendees” identify the number of sessions participants have attended and whether intended attendees have been reached or not; “adaptation/fidelity” refers to the extent to which the programme is modified during the sessions, ranging from no modifications to many modifications. Participants’ response is a final dimension that includes the evaluation of participants’ “satisfaction” and their level of “engagement” and “participation” across the sessions. Our second aim was to analyse the variability in the associative patterns of the components belonging to the contextual, processual, and participants’ response dimensions, following a person/programme-centred approach (Bergman et al., 2003; Magnusson, 1998). Given that the components may be associated in different ways (Hickey et al., 2021), we tried to identify how these components related to each other, yielding profiles that differ from one another. Then, we examined how programme impact, in terms of programme outcomes, large-scale replication, and sustainability, was associated with these profiles, in order to further characterize them. We expected that better implementation would be related to better programme results (Durlak, 2015a; Durlak & DuPre, 2008). In sum, using the same set of implementation dimensions and components for all programmes would allow their comparison and the interpretation of results in terms of quality standards.

Sampled Programmes

Data collection took place from May 2020 to April 2021. It resulted in 57 programmes implemented in education, health care, social, and community sectors.
General descriptors of the identified programmes indicated that they were fully manualized, that their periodicity ranged from weekly to monthly, and that they targeted different populations, such as couples, parents, children, families, or communities. Inclusion and exclusion criteria for eligibility were as follows. The inclusion criteria (all conditions had to be met) were: authorship (original and/or adaptations), theoretical background, number of sessions exceeding three, and having at least one available written report of the programme’s results, such as a white paper or a publication. The exclusion criteria (any one of these conditions was enough to exclude the programme) were: the organization that delivered the programme was unidentified, the target population was adults unrelated to parenthood and family issues, and the content and programme methodology were unknown.

Instrument and Data Collection

In order to collect the programmes’ information, a Data Collection Sheet (DCS) was created by EurofamNet members in accordance with international quality standards for family support programmes. The DCS included information on the programmes’ identification, description, implementation, evaluation design, evaluation tools, and impact (see Rodrigo et al.’s [2022] introductory article in this special issue). This paper focusses on the part of the DCS that was designed based on the main recommended dimensions and components of implementation (Berkel et al., 2011; Durlak, 2015a; Durlak & DuPre, 2008; Pinto et al., 2021) described in the introduction. Table 1 shows the implementation dimensions (context, process, and participant responses), their corresponding components, and their respective response options.
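As a reading aid, the three implementation dimensions and the components named in the text can be laid out as a simple lookup structure. This is only an illustrative sketch: the component names come from the definitions given earlier, whereas the dictionary layout, the variable name `IMPLEMENTATION_DIMENSIONS`, and the helper `dimension_of` are assumptions and not part of the DCS. The response options listed in Table 1 are not reproduced here.

```python
# Illustrative layout only: component names are taken from the text;
# the dictionary structure and all identifiers are assumptions.
IMPLEMENTATION_DIMENSIONS = {
    "context": [
        "setting of delivery",
        "professional discipline and training",
        "organizational support",
        "barriers/facilitating factors",
    ],
    "process": [
        "mode of delivery",
        "session monitoring",
        "attendance and reached attendees",
        "adaptation/fidelity",
    ],
    "participant response": [
        "satisfaction",
        "engagement",
        "participation",
    ],
}


def dimension_of(component: str) -> str:
    """Return the dimension a component belongs to, or raise KeyError."""
    for dimension, components in IMPLEMENTATION_DIMENSIONS.items():
        if component in components:
            return dimension
    raise KeyError(component)
```

A call such as `dimension_of("session monitoring")` returns the name of the dimension under which that component was defined.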
Procedure

The programmes were identified by the Spanish Supportive Network in the context of the European Family Support Network project (EurofamNet), a COST project led by Spain that aims to inform family policies and practices. The network is made up of entities at the national (e.g., National Childhood Observatory, National Union of Family Associations, UNAF, UNICEF Spain, Children’s Platform), regional (e.g., Cantabria government, Extremadura government, Andalusia government), and local (e.g., Social Rights and Services Department of the Region of Asturias) levels in several sectors, professional associations of Social Workers, Psychologists, Pedagogists, and Social Educators, as well as experts from Spanish universities. Members of the Spanish Supportive Network located in different territories received a 5-hour training on how to approach knowledgeable informants (e.g., coordinators and practitioners of child and family services) of the programmes that fulfilled the inclusion criteria. They were also informed about the content of the Data Collection Sheet and how to fill in responses on an editable pdf for each programme. Finally, they were told to send the editable pdf to a single person who was responsible for storing the original data files and backing them up on the intranet of the EurofamNet website (see the full catalogue of programmes on the EurofamNet webpage, https://eurofamnet.eu/).

Analysis Plan

Analyses were conducted in three phases. First, we performed descriptive statistics (frequency and percentage) of implementation components and impact dimensions according to response options. Second, we analysed the variability in the associative patterns among contextual, processual, and participant response components, with a latent profile analysis (LPA) using MPlus, version 7 (Muthén & Muthén, 2012). LPA uses latent variables to identify groups of individuals/programmes with similar patterns of scores on a set of variables.
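The information criteria and the entropy measure commonly used to choose the number of profiles in an LPA follow standard formulas. The sketch below shows them in plain Python under stated assumptions: the function names and arguments are hypothetical, the log-likelihood and posterior class probabilities would come from the fitted mixture model, and nothing here reproduces the MPlus estimation itself or any value from this study.

```python
import math


def aic(log_lik: float, n_params: int) -> float:
    """Akaike information criterion: -2 logL + 2p (lower is better)."""
    return -2.0 * log_lik + 2.0 * n_params


def bic(log_lik: float, n_params: int, n: int) -> float:
    """Bayesian information criterion: -2 logL + p ln(n)."""
    return -2.0 * log_lik + n_params * math.log(n)


def abic(log_lik: float, n_params: int, n: int) -> float:
    """Sample-size-adjusted BIC: n is replaced by (n + 2) / 24."""
    return -2.0 * log_lik + n_params * math.log((n + 2) / 24.0)


def relative_entropy(posteriors: list[list[float]]) -> float:
    """Relative entropy in [0, 1]; values near 1 mean clean classification.

    `posteriors` holds one row per case (here, per programme), each row
    being that case's posterior probabilities of belonging to each class.
    """
    n = len(posteriors)
    k = len(posteriors[0])
    if k < 2:
        raise ValueError("entropy is only defined for two or more classes")
    uncertainty = 0.0
    for row in posteriors:
        for p in row:
            if p > 0.0:  # p * ln(p) tends to 0 as p tends to 0
                uncertainty -= p * math.log(p)
    return 1.0 - uncertainty / (n * math.log(k))
```

In practice, these values would be computed for each candidate solution and compared side by side, with lower AIC, BIC, and ABIC and higher entropy favouring a solution.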
Groups were determined through an iterative process in which fit indexes were examined for two- to six-class solutions (Nylund et al., 2007). The optimal number of profiles was chosen based on the lower values of several criteria (when k > 1): Akaike information criterion (AIC; Akaike, 1974), Bayesian information criterion (BIC; Schwarz, 1978), and sample size-adjusted BIC (ABIC; Sclove, 1987). The Lo-Mendell-Rubin likelihood ratio test (LMR-LRT; Lo et al., 2001) determined the log likelihood difference test statistic to compare each model with k-1 and k-6 class models and provided the p-value to determine if there was statistical significance (typically p < .05). Finally, the entropy value was used to reveal the ability of the model to correctly classify programmes, with high values indicating more optimal classification. Although the sample size is small, LPA was used following Wurpts and Geiser (2014), who consider that certain factors, such as a higher number and quality of indicators, can partially offset the detrimental effects of a limited sample size. Finally, analyses of variance (ANOVA), using the profiles as independent variables, were performed to examine how impact factors (type of outcome, large-scale replication, and sustainability) were associated with the implementation profiles, using the SPSS software package v25. The effect size (ES) was explored using partial η² statistics (Cohen, 1988): η² = .01 indicates a small effect, η² = .06 indicates a medium effect, and η² = .14 indicates a large effect.

Ethical Considerations

All the experts who participated in the study took part voluntarily after signing an informed consent form in accordance with the Declaration of Helsinki.
This study was carried out in accordance with the European Cooperation in Science and Technology Association policy on inclusiveness and excellence, as written in the Memorandum of Understanding for the implementation of the COST Action “The European Family Support Network. A bottom-up, evidence-based and multidisciplinary approach” (EuroFam-Net) CA18123.

Descriptive Analyses of Implementation Variables

According to Table 2, context variables were characterized by programmes mainly delivered face to face, primarily implemented by psychologists, who were specifically instructed about the programme’s content and implementation procedures. Programmes received strong support from their agencies to be implemented and took place in several settings. In most cases, the process was monitored through checklists or reports, registering the attendance rate and with few modifications of the programme contents. Most of the programmes analysed participants’ responses using measures such as satisfaction and participation in the session, but not so much their engagement.

Identifying Implementation Profiles

The second step was to identify programmes with similar implementation patterns. The latent profile analysis (LPA) revealed that a 4-profile solution (Table 3) was the best-fitting model, due to its lower AIC, BIC, and ABIC, higher entropy, and significant LMRT value in comparison to the 2-profile, 3-profile, 5-profile, and 6-profile solutions, which were rejected because of non-significant LMRT values.

Table 3 Model Fit Indexes for the 2-6 Class Solution

Note. AIC: Akaike information criterion; BIC: Bayesian information criterion; ABIC: sample size-adjusted Bayesian information criterion; LMRT: Lo-Mendell-Rubin Test. ***p ≤ .001.

The mean scores on the profile variables are shown in Table 4. One-way ANOVAs and post hoc Tukey’s tests were conducted to identify significant mean differences of the implementation variables.
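For a one-way design such as the profile comparisons, the effect size η² is the ratio of the between-group sum of squares to the total sum of squares (in a one-way ANOVA, partial η² and η² coincide). The following is a minimal sketch, not the SPSS procedure used in the study: the function names are hypothetical, the "negligible" label for values below .01 is an added assumption, and the thresholds are the benchmarks cited in the analysis plan (.01, .06, .14).

```python
def eta_squared(groups: list[list[float]]) -> float:
    """Eta squared for a one-way design: SS_between / SS_total."""
    all_scores = [x for group in groups for x in group]
    grand_mean = sum(all_scores) / len(all_scores)
    ss_total = sum((x - grand_mean) ** 2 for x in all_scores)
    if ss_total == 0:
        raise ValueError("scores have no variance")
    ss_between = sum(
        len(group) * (sum(group) / len(group) - grand_mean) ** 2
        for group in groups
    )
    return ss_between / ss_total


def effect_size_label(eta2: float) -> str:
    """Label an effect using the .01 / .06 / .14 benchmarks."""
    if eta2 >= 0.14:
        return "large"
    if eta2 >= 0.06:
        return "medium"
    if eta2 >= 0.01:
        return "small"
    return "negligible"  # assumption: label for values below .01
```

Each inner list passed to `eta_squared` would hold the scores of the programmes in one profile on a single implementation or impact variable.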
The model profiles were as follows (considering the relative value of the implementation scores across the four profiles). Profile 1 was labelled “social services and NGO settings” (n = 12, 21.1%) and was characterized by programmes delivered in social services and NGOs, high levels of specific training in the programme content and operation, and measures of participants’ drop-outs and engagement in the activities. Profile 2 was labelled “health setting” (n = 18, 31.6%) and was described by programmes delivered in health centres, low levels of specific training in the programme content and operation, and measures of participants’ drop-outs but not of engagement in the activities. Profile 3 was labelled “multisetting” (n = 8, 14%) and was depicted by programmes delivered in social services, health services, civic centres, and NGOs. These programmes were run by many professional disciplines, with the exception of healthcare professionals, with a high level of specific training, and with measures of participants’ drop-outs and engagement in the activities. Also, professionals reflected on facilitating factors. Profile 4 was labelled “educational setting” (n = 19, 33.3%) and was portrayed by programmes delivered in schools and moderately in social services. They were led by pedagogues, social workers, social educators, and teachers, with high levels of specific training in the programme content and operation, and with less monitoring for drop-outs, for participants’ engagement in the activities, and for implementation facilitating factors.

Table 4 Mean Differences of the Implementation Dimensions for the Four-Class Model

*p ≤ .05, **p ≤ .01, ***p ≤ .001.
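The reported profile shares can be checked directly from the profile sizes given above. The short sketch below uses only the n values stated in the text; the variable names are illustrative.

```python
# Profile sizes as reported in the text (n per profile).
profile_sizes = {
    "Profile 1, social services and NGO settings": 12,
    "Profile 2, health setting": 18,
    "Profile 3, multisetting": 8,
    "Profile 4, educational setting": 19,
}

# The four profiles partition the full sample of programmes.
total_programmes = sum(profile_sizes.values())

# Share of each profile as a percentage, rounded to one decimal place.
profile_shares = {
    name: round(100 * n / total_programmes, 1)
    for name, n in profile_sizes.items()
}

for name, share in profile_shares.items():
    print(f"{name}: n = {profile_sizes[name]}, {share}%")
```

The sizes sum to the 57 sampled programmes, and the rounded shares match the percentages reported for the four profiles (21.1%, 31.6%, 14%, and 33.3%).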
Identifying Impact Dimensions Related to Implementation Profiles

Implementation profiles showed significant relationships with the three impact variables: outcomes with non-discernible effects (type of outcome), cultural adaptations (large-scale replication), and programme integrated into the service offering (sustainability) (see Table 5). Profile 1, social services and NGO settings, was not related to any impact factor. Profile 2, health setting, had high sustainability, with the programme integrated into the service offering. Profile 3, multisetting, had more cultural adaptations in order to facilitate scaling up (large-scale replication). Profile 4, educational setting, was related to non-discernible outcome effects and low levels of programme integration into the service offering.

Table 5 Mean Differences of the Implementation Profiles on the Impact Variables

*p ≤ .05, **p ≤ .01, ***p ≤ .001.

This study examines a set of implementation dimensions related to the context, the process, and the participant responses and their respective components in a sample of 57 evidence-based Spanish programmes. This allowed a comparative assessment of implementation and the interpretation of results in terms of quality standards. Regarding our first descriptive objective, overall, programmes provided a fairly complete account of the three implementation dimensions, according to the quality standards for effectiveness (Flay et al., 2005; Gottfredson et al., 2015). After all, these programmes met the previously established inclusion and exclusion criteria, which ensured at least a basic level of quality assurance. However, some strengths and weaknesses were identified in each dimension. One strength of the context dimension was that the programmes were implemented in a variety of settings, such as social services, schools, health services, NGOs, civic centres, and family homes, and were led by professionals from different disciplines.
These are good examples of intersectoral work with families, which is recommended by the World Health Organization Regional Office for Europe (World Health Organization [WHO], 2020). One weakness was that not all the programmes reported professional training or involved their professionals in a process of reflection that would facilitate the capture of emerging factors that contribute to effective implementation (Smith et al., 2020). One strength of the process dimension was that most programmes provided attendance rates and kept programme adaptations to a minimum, preserving their fidelity (Gottfredson et al., 2015). One weakness was that the mode of delivery was mainly face to face, with less use of the mixed modality (face to face and online), which means that information and communication technologies (ICT) were underused in family services, as suggested in a recent narrative review (Canário et al., 2022). But this may have changed due to the recent pandemic, as has already happened in family therapy (Lebow, 2021). Finally, one strength of the participant response dimension was that most programmes reported attendees’ satisfaction. One weakness was that fewer programmes reported on participant engagement. Engagement is a good indicator of the active methodology used (Rodrigo et al., 2010) and has been reported as a core ingredient of successful interventions, equated to family alliance (Álvarez et al., 2020). Regarding our second goal, we first examined the profile of programme implementation components following a programme-centred approach (Bergman et al., 2003; Magnusson, 1998). Profile analyses showed that the components were associated in different ways, as previously suggested (Hickey et al., 2021). What was new here was the main organizer of the groups: the setting of the programmes. The four profiles mainly differed in professional discipline, training, participant response, and professional perception of implementation.
The programmes in Profile 3, “multisetting” (14%), delivered in social services, health services, civic centres, and NGOs, were the best implemented: they were led by many professional disciplines, their implementers were well trained, and they monitored drop-out rates and participants’ engagement and registered professionals’ appraisals of facilitating factors. Programmes in Profile 1, “social services/NGO settings” (21.1%), were an intermediate case: they provided specific training in the programme and monitored drop-out rates and participants’ engagement. Programmes in Profile 2, “health setting” (31.6%), were also an intermediate case but of relatively poorer quality than those in Profile 1, since they had low levels of specific training and monitored participants’ drop-outs, but not participants’ engagement in the activities or professionals’ appraisal of the implementation process. Finally, programmes in Profile 4, “educational setting” (33.3%), delivered in schools and moderately in social services, had the most room for improvement in terms of implementation. On the positive side, they were led by pedagogues, social workers, social educators, and teachers, and provided training, but they did not monitor drop-outs or report on participants’ engagement or implementation facilitating factors. Of note, although the profile solution was robust, several features did not distinguish between profiles: the involvement of psychologists, who usually take part in all the profiles, the use of the face-to-face mode of delivery, good organizational support, the use of different techniques to monitor the sessions, the measurement of attendance rates, few adaptations, and the assessment of participant satisfaction. All of these are positive assets that guarantee a good level of implementation according to the standards.
A final comparison of profile membership with some features of programme impact (type of outcome, large-scale replication, and sustainability) confirmed the existence of relationships between the quality of implementation and the results obtained, as would be expected (Durlak, 2015a; Durlak & DuPre, 2008). Less well implemented programmes had fewer chances of being well evaluated, being ready for large-scale replication, and being well integrated into the service. Non-discernible programme outcomes due to inadequate evaluation were mainly limited to Profile 4, “educational setting”, and were almost non-existent in the other profiles. Cultural adaptations for large-scale replication were more likely in Profile 3, “multisetting”, than in the other profiles. Again, it seems that programmes delivered in multiple settings take the lead in quality assurance, while programmes delivered in educational settings, such as schools and social services, although already at a very good level, can be further improved to meet all quality standards. In addition, sustainability in terms of the programme being integrated into the service offering was less likely to be found in Profile 4, “educational setting”, and more likely in Profile 2, “health setting”. This may indicate differences in the assessment orientation of those settings regarding family and parenting programmes, and it emphasizes the importance of integrating programmes into the services as a long-term investment. If the main goal is to support parents and families to better equip them to fulfil their varying tasks (Daly, 2013), good coordination between services may offer families and parents the resources to satisfy their needs, thereby overcoming the limitations of services already overwhelmed by varying demands.
This study has several limitations that should be addressed in future studies.
First, our data collection procedure is very sensitive to the diversity of territories and fields of application, but it does not guarantee that all the programmes operating in Spain are included. Second, we rely on the assessments of those responsible for the data collection, which could bias the responses. However, the items in the survey are very factual and the responses can be checked against written reports and publications already available. Finally, the sample size is moderate considering the number of items to be covered for each programme. This may lead to type II errors, that is, presenting a result as not significant owing to a lack of statistical power.
In conclusion, our findings show that the average level of implementation of the programmes is quite good according to quality standards. This is notable given the general dearth of implementation research, which needs to be addressed in real-world applications of the programmes. The programmes cover most of the implementation components, are manualized (they have handbooks or guides), are rigorous in their monitoring procedures, and are well supported by the services, which means that they have managed to overcome most of the conceptual, methodological, and economic obstacles of implementation research. We have also shown that ways of improving programme implementation are related to where the programme is located. This may seem a trivial issue but, in fact, the setting is highly diagnostic of the level of adoption of evidence-based professional practices. The setting relates to diversity in the culture of intervention, different forms of evaluation, and the various professional disciplines that can shape the work with families. However, this heterogeneity, far from being a drawback, is an opportunity for further improvement towards integrated work with families, who tend to visit all those settings and worry about the lack of coordination (Shapiro et al., 2012).
The lessons learned from the present findings point to several recommendations. First, it is important to include ICT-based programmes and expand this mode of delivery, as it has proven very useful in times of crisis, providing another way to help families. Second, programmes are very suitable tools for intersectoral work, since they are based on promoting a similar set of parenting and family competences, and these competences may be addressed in different sectors. Third, the difficulties seemingly posed by the presence of professionals from different disciplines can be overcome by providing additional training in inter-professional competences combined with a common framework based on the positive parenting approach and consensual evidence-based practices. Finally, replicating evidence-based programmes and improving sustainability are good ways to build a strong prevention belt with the main goal of increasing families’ resilience in times of crisis. We hope that these recommendations will help the future development, evaluation, and faithful replication of parenting and family programmes in Spain.
Conflict of Interest
The authors of this article declare no conflict of interest.
Cite this article as: Byrne, S., López-Larrosa, S., Martín, J. C., Callejas, E., Máiquez, M. L., & Rodrigo, M. J. (2022). Context, process, and participant response in the implementation of family support programmes in Spain. Psicología Educativa, 29(1), 25-33. https://doi.org/10.5093/psed2022a8
Funding: This article is based upon work from COST Action CA18123 European Family Support Network, supported by the European Cooperation in Science and Technology (COST). www.cost.eu.

Cite this article as: Byrne, S., López-Larrosa, S., Martín, J. C., Callejas, E., Máiquez, M. L., & Rodrigo, M. J. (2023). Context, Process, and Participant Response in the Implementation of Family Support Programmes in Spain. Psicología Educativa, 29(1), 25-33. https://doi.org/10.5093/psed2022a8

Correspondence: sbyrne@ull.edu.es (S. Byrne).
Copyright © 2026. Colegio Oficial de la Psicología de Madrid
